Computational Photography | Vibepedia

Computational photography encompasses techniques that enhance camera capabilities, enable entirely new features, and reduce the physical size and cost of…


Overview

The seeds of computational photography were sown in the early days of digital imaging, but its true genesis can be traced to the late 20th century and advances in digital signal processing and computer vision. Early pioneers explored ways to overcome the inherent limitations of nascent digital sensors and optics, and the field gained significant traction as digital cameras became widespread. Key precursors include early work on image mosaicking for panoramic views and HDR imaging techniques developed in academic labs such as the [[stanford-university|Stanford University]] Graphics Lab. The integration of these ideas into consumer devices, particularly mobile phones, marked a pivotal moment, transforming photography from a purely optical process into a hybrid of optics and sophisticated algorithms.

⚙️ How It Works

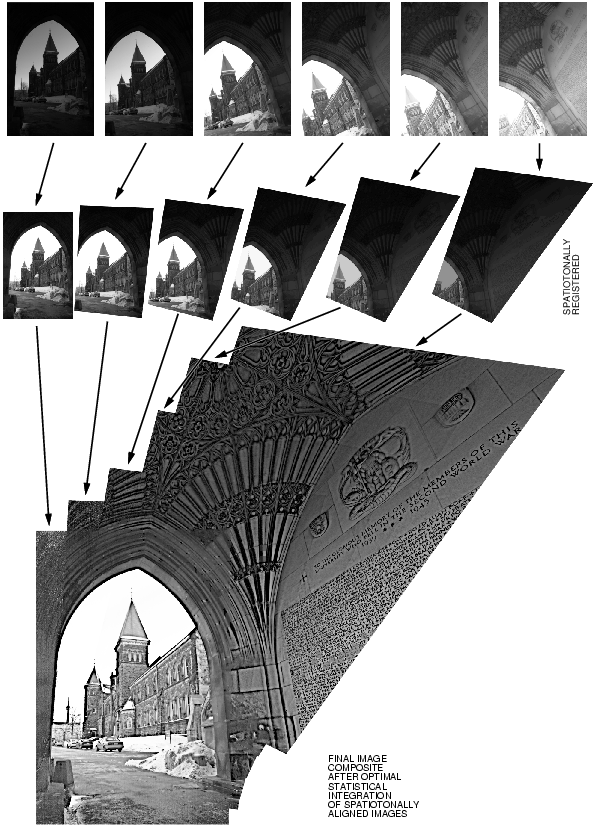

At its core, computational photography replaces or augments optical processes with digital computation. Instead of relying on a single exposure through a single lens, a camera may capture multiple exposures and combine them (as in [[high-dynamic-range-imaging|HDR]]) to record a wider range of brightness than one frame can hold. Algorithms estimate scene depth, often using multiple camera lenses or specialized sensors like [[lidar|LiDAR]], to simulate the shallow depth of field characteristic of large-sensor cameras such as DSLRs, a technique popularized by [[apple-inc|Apple's]] [[iphone|iPhone]] portrait mode. In low light, cameras capture either longer exposures or bursts of frames, which are then merged, denoised, and sharpened, often with machine learning models trained on large datasets. Light field cameras, a more specialized application, record not only the intensity and color of light but also its direction, allowing post-capture refocusing and 3D scene reconstruction. The same computational approach underpins features such as super-resolution zoom, object recognition for scene optimization, and real-time image stabilization, capabilities that are difficult to achieve with optics alone, especially in a device as small as a phone.
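The multi-exposure idea described above can be sketched in a few lines. This is a minimal illustration, not any vendor's pipeline, and it rests on stated assumptions: the frames are already aligned, the sensor response is linear, pixel values are normalized to [0, 1], and exposure times are known. The names `merge_exposures`, `hat_weight`, and `simulate_frame`, and the 0.95 saturation threshold, are hypothetical choices for this example. Each frame yields a per-pixel radiance estimate z / t, and the estimates are averaged with a "hat" weight that trusts mid-tones and ignores black or clipped pixels, a common choice in textbook HDR merging.

```python
from typing import List, Sequence


def hat_weight(z: float, saturation: float) -> float:
    """Weight a pixel value: trust mid-tones, ignore black or clipped pixels."""
    if z <= 0.0 or z >= saturation:
        return 0.0
    return min(z, 1.0 - z)


def merge_exposures(
    frames: Sequence[Sequence[float]],
    exposure_times: Sequence[float],
    saturation: float = 0.95,  # hypothetical clipping threshold
) -> List[float]:
    """Merge aligned, linear frames (values in [0, 1]) into relative radiance.

    Every frame gives a per-pixel radiance estimate z / t; the estimates are
    averaged with hat weights so well-exposed values dominate the result.
    """
    if not frames or len(frames) != len(exposure_times):
        raise ValueError("need one exposure time per frame")
    n_pixels = len(frames[0])
    if any(len(f) != n_pixels for f in frames):
        raise ValueError("all frames must have the same number of pixels")
    # The shortest exposure is the least likely to be clipped.
    shortest = min(range(len(frames)), key=lambda i: exposure_times[i])

    radiance: List[float] = []
    for p in range(n_pixels):
        num = 0.0
        den = 0.0
        for frame, t in zip(frames, exposure_times):
            w = hat_weight(frame[p], saturation)
            num += w * frame[p] / t
            den += w
        if den > 0.0:
            radiance.append(num / den)
        else:
            # No usable sample (all black or all clipped): fall back to the
            # shortest exposure, which is only a lower bound if it is clipped.
            radiance.append(frames[shortest][p] / exposure_times[shortest])
    return radiance


def simulate_frame(scene: Sequence[float], t: float) -> List[float]:
    """Hypothetical ideal linear sensor: value = radiance * time, clipped at 1."""
    return [min(1.0, r * t) for r in scene]


if __name__ == "__main__":
    scene = [0.1, 0.5, 2.0]  # relative radiance; 2.0 clips at t = 1.0
    times = [1.0, 0.25]
    frames = [simulate_frame(scene, t) for t in times]
    print(merge_exposures(frames, times))  # approximately [0.1, 0.5, 2.0]
```

With equal exposure times, the same function becomes a weighted average of a burst, the basic idea behind multi-frame noise reduction. Production pipelines add steps this sketch omits, such as frame alignment, motion rejection, and tone mapping the merged result back to a displayable range.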

👥 Key People & Organizations

Key figures in computational photography include [[ren-ng|Ren Ng]], founder of [[lytro-inc|Lytro]], who pioneered consumer light field photography, and [[frédo-durand|Frédo Durand]], a professor at [[mit|MIT]] whose work on image processing and computational imaging has been highly influential. [[qualcomm-inc|Qualcomm]] and [[mediatek-inc|MediaTek]] design mobile chipsets with dedicated neural processing units that run complex imaging algorithms on the device. Academic institutions such as [[stanford-university|Stanford University]], [[mit|MIT]], and [[carnegie-mellon-university|Carnegie Mellon University]] remain hubs for foundational research in the field. The [[siggraph|SIGGRAPH]] and [[ieee-conference-on-computer-vision-and-pattern-recognition|CVPR]] conferences are key venues for presenting new research in this domain.

🌍 Cultural Impact & Influence

Computational photography has fundamentally reshaped visual culture and the accessibility of high-quality imagery. Advanced camera capabilities, driven by computational techniques, are now standard in smartphones, democratizing photography and enabling billions of people to capture professional-looking images with ease. This has fueled an explosion of user-generated content on platforms like [[instagram-com|Instagram]], [[tiktok-com|TikTok]], and [[flickr-com|Flickr]]. The ability to manipulate depth of field and capture striking low-light shots has influenced artistic expression and visual storytelling. Computational photography has also blurred the line between captured reality and digital synthesis, prompting discussions about authenticity and the nature of photographic evidence. Aesthetic trends in online media, from the polished look of influencer content to the dramatic lighting of short-form videos, owe much to these imaging technologies.

⚡ Current State & Latest Developments

The current state of computational photography is characterized by the rapid integration of artificial intelligence and machine learning. Companies are moving beyond predefined algorithms toward dynamic, AI-driven image processing that adapts in real time to scene content. Google's [[magic-eraser|Magic Eraser]], which removes unwanted objects, and Apple's [[photonic-engine|Photonic Engine]], which improves low-light performance, are prime examples of this trend. More powerful mobile processors with dedicated AI cores, such as [[qualcomm-snapdragon-8-gen-3|Qualcomm's Snapdragon 8 Gen 3]] and [[apple-a17-pro|Apple's A17 Pro]], are enabling increasingly sophisticated on-device processing. Research is also focusing on multi-camera fusion, in which data from several lenses is combined more intelligently into a single, higher-fidelity image, and on real-time video processing for augmented reality applications. The push for higher-resolution sensors continues, but the emphasis is increasingly on how computation can extract more information and quality from existing sensor technology.

🤔 Controversies & Debates

A significant debate revolves around 'authenticity' in computational photography. Critics argue that the extensive algorithmic processing inherent in these techniques departs from capturing reality as the eye sees it, producing 'fake' or overly idealized images. The debate intensifies when these technologies are applied in sensitive areas like news reporting or scientific documentation. Another controversy concerns the 'black box' nature of AI-driven image processing: users often have little understanding of how their images are being altered, raising concerns that the algorithms may favor certain skin tones or lighting conditions. The complexity and proprietary nature of these algorithms can also create vendor lock-in and limit interoperability between devices and software ecosystems. Finally, the environmental cost of the computing power needed to train and run these models is a growing concern.

🔮 Future Outlook & Predictions

The future of computational photography is inextricably linked to advances in AI, sensor technology, and augmented reality. Scene understanding is expected to become more sophisticated, enabling cameras to adjust parameters and apply effects with greater accuracy. Generative AI models may be used not only to enhance existing images but also to synthesize new visual elements, or even entire scenes, in real time. The development of 'neural cameras' that perform most image processing directly within the sensor could lead to further miniaturization and power efficiency. Light field and plenoptic imaging technologies, once confined to research labs, may see broader consumer adoption, offering true post-capture refocusing and 3D effects. Integration with AR glasses and other head-mounted displays will likely drive demand for real-time, high-fidelity computational imaging that seamlessly blends the digital and physical worlds.

Key Facts

- Category: technology
- Type: topic